1. How to debug when SQL can not be executed rightly in ShardingSphere?

Answer:

sql.show configuration is provided in ShardingSphere-Proxy and post-1.5.0 version of ShardingSphere-JDBC, enabling the context parsing, rewritten SQL and the routed data source printed to info log. sql.show configuration is off in default, and users can turn it on in configurations.

A Tip: Property sql.show has changed to sql-show in version 5.x.

2. Why do some compiling errors appear? Why did not the IDEA index the generated codes?

Answer:

ShardingSphere uses lombok to enable minimal coding. For more details about using and installment, please refer to the official website of lombok.

The codes under the package org.apache.shardingsphere.sql.parser.autogen are generated by ANTLR. You may execute the following command to generate codes:

./mvnw -Dcheckstyle.skip=true -Drat.skip=true -Dmaven.javadoc.skip=true -Djacoco.skip=true -DskipITs -DskipTests install -T1C

The generated codes such as org.apache.shardingsphere.sql.parser.autogen.PostgreSQLStatementParser may be too large to be indexed by the IDEA.

You may configure the IDEA’s property idea.max.intellisense.filesize=10000.

3. Why is xsd unable to be found when Spring Namespace is used?

Answer:

The use norm of Spring Namespace does not require to deploy xsd files to the official website. But considering some users’ needs, we will deploy them to ShardingSphere’s official website.

Actually, META-INF\spring.schemas in the jar package of shardingsphere-jdbc-spring-namespace has been configured with the position of xsd files: META-INF\namespace\sharding.xsd and META-INF\namespace\replica-query.xsd, so you only need to make sure that the file is in the jar package.

4. How to solve Cloud not resolve placeholder … in string value … error?

Answer:

${...} or $->{...} can be used in inline expression identifiers, but the former one clashes with place holders in Spring property files, so $->{...} is recommended to be used in Spring as inline expression identifiers.

5. Why does float number appear in the return result of inline expression?

Answer:

The division result of Java integers is also integer, but in Groovy syntax of inline expression, the division result of integers is float number. To obtain integer division result, A/B needs to be modified as A.intdiv(B).

6. If sharding database is partial, should tables without sharding database & table be configured in sharding rules?

Answer:

Yes. ShardingSphere merges multiple data sources to a united logic data source. Therefore, for the part without sharding database or table, ShardingSphere can not decide which data source to route to without sharding rules. However, ShardingSphere has provided two options to simplify configurations.

Option 1: configure default-data-source. All the tables in default data sources need not to be configured in sharding rules. ShardingSphere will route the table to the default data source when it cannot find sharding data source.

Option 2: isolate data sources without sharding database & table from ShardingSphere; use multiple data sources to process sharding situations or non-sharding situations.

7. In addition to internal distributed primary key, does ShardingSphere support other native auto-increment keys?

Answer:

Yes. But there is restriction to the use of native auto-increment keys, which means they cannot be used as sharding keys at the same time.

Since ShardingSphere does not have the database table structure and native auto-increment key is not included in original SQL, it cannot parse that field to the sharding field. If the auto-increment key is not sharding key, it can be returned normally and is needless to be cared. But if the auto-increment key is also used as sharding key, ShardingSphere cannot parse its sharding value, which will make SQL routed to multiple tables and influence the rightness of the application.

The premise for returning native auto-increment key is that INSERT SQL is eventually routed to one table. Therefore, auto-increment key will return zero when INSERT SQL returns multiple tables.

8. When generic Long type SingleKeyTableShardingAlgorithm is used, why doesClassCastException: Integer can not cast to Long exception appear?

Answer:

You must make sure the field in database table consistent with that in sharding algorithms. For example, the field type in database is int(11) and the sharding type corresponds to genetic type is Integer, if you want to configure Long type, please make sure the field type in the database is bigint.

9. In SQLSever and PostgreSQL, why does the aggregation column without alias throw exception?

Answer:

SQLServer and PostgreSQL will rename aggregation columns acquired without alias, such as the following SQL:

SELECT SUM(num), SUM(num2) FROM tablexxx;

Columns acquired by SQLServer are empty string and (2); columns acquired by PostgreSQL are empty sum and sum(2). It will cause error because ShardingSphere is unable to find the corresponding column.

The right SQL should be written as:

SELECT SUM(num) AS sum_num, SUM(num2) AS sum_num2 FROM tablexxx;

10. Why does Oracle database throw “Order by value must implements Comparable” exception when using Timestamp Order By?

Answer:

There are two solutions for the above problem: 1. Configure JVM parameter “-oracle.jdbc.J2EE13Compliant=true” 2. Set System.getProperties().setProperty(“oracle.jdbc.J2EE13Compliant”, “true”) codes in the initialization of the project.

Reasons:

org.apache.shardingsphere.sharding.merge.dql.orderby.OrderByValue#getOrderValues():

private List<Comparable<?>> getOrderValues() throws SQLException {

List<Comparable<?>> result = new ArrayList<>(orderByItems.size());

for (OrderByItem each : orderByItems) {

Object value = queryResult.getValue(each.getIndex(), Object.class);

Preconditions.checkState(null == value || value instanceof Comparable, "Order by value must implements Comparable");

result.add((Comparable<?>) value);

}

return result;

}

After using resultSet.getObject(int index), for TimeStamp oracle, the system will decide whether to return java.sql.TimeStamp or define oralce.sql.TIMESTAMP according to the property of oracle.jdbc.J2EE13Compliant. See oracle.jdbc.driver.TimestampAccessor#getObject(int var1) method in ojdbc codes for more detail:

Object getObject(int var1) throws SQLException {

Object var2 = null;

if(this.rowSpaceIndicator == null) {

DatabaseError.throwSqlException(21);

}

if(this.rowSpaceIndicator[this.indicatorIndex + var1] != -1) {

if(this.externalType != 0) {

switch(this.externalType) {

case 93:

return this.getTimestamp(var1);

default:

DatabaseError.throwSqlException(4);

return null;

}

}

if(this.statement.connection.j2ee13Compliant) {

var2 = this.getTimestamp(var1);

} else {

var2 = this.getTIMESTAMP(var1);

}

}

return var2;

}

11. Why is the database sharding result not correct when using Proxool?

Answer:

When using Proxool to configure multiple data sources, each one of them should be configured with alias. It is because Proxool would check whether existing alias is included in the connection pool or not when acquiring connections, so without alias, each connection will be acquired from the same data source.

The followings are core codes from ProxoolDataSource getConnection method in Proxool:

if(!ConnectionPoolManager.getInstance().isPoolExists(this.alias)) {

this.registerPool();

}

For more alias usages, please refer to Proxool official website.

12. Why are the default distributed auto-augment key strategy provided by ShardingSphere not continuous and most of them end with even numbers?

Answer:

ShardingSphere uses snowflake algorithms as the default distributed auto-augment key strategy to make sure unrepeated and decentralized auto-augment sequence is generated under the distributed situations. Therefore, auto-augment keys can be incremental but not continuous.

But the last four numbers of snowflake algorithm are incremental value within one millisecond. Thus, if concurrency degree in one millisecond is not high, the last four numbers are likely to be zero, which explains why the rate of even end number is higher.

In 3.1.0 version, the problem of ending with even numbers has been totally solved, please refer to: https://github.com/apache/shardingsphere/issues/1617

13. In Windows environment,when cloning ShardingSphere source code through Git, why prompt filename too long and how to solve it?

Answer:

To ensure the readability of source code,the ShardingSphere Coding Specification requires that the naming of classes,methods and variables be literal and avoid abbreviations,which may result in Some source files have long names.

Since the Git version of Windows is compiled using msys,it uses the old version of Windows Api,limiting the file name to no more than 260 characters.

The solutions are as follows:

Open cmd.exe (you need to add git to environment variables) and execute the following command to allow git supporting log paths:

git config --global core.longpaths true

If we use windows 10, also need enable win32 log paths in registry editor or group strategy(need reboot):

Create the registry key

HKLM\SYSTEM\CurrentControlSet\Control\FileSystem LongPathsEnabled(Type: REG_DWORD) in registry editor, and be set to 1. Or click “setting” button in system menu, print “Group Policy” to open a new window “Edit Group Policy”, and then click ‘Computer Configuration’ > ‘Administrative Templates’ > ‘System’ > ‘Filesystem’, and then turn on ‘Enable Win32 long paths’ option.

Reference material:

https://docs.microsoft.com/zh-cn/windows/desktop/FileIO/naming-a-file https://ourcodeworld.com/articles/read/109/how-to-solve-filename-too-long-error-in-git-powershell-and-github-application-for-windows

14. In Windows environment, could not find or load main class org.apache.shardingsphere.proxy.Bootstrap, how to solve it?

Answer:

Some decompression tools may truncate the file name when decompressing the ShardingSphere-Proxy binary package, resulting in some classes not being found.

The solutions:

Open cmd.exe and execute the following command:

tar zxvf apache-shardingsphere-${RELEASE.VERSION}-shardingsphere-proxy-bin.tar.gz

15. How to solve Type is required error?

Answer:

In Apache ShardingSphere, many functionality implementation are uploaded through SPI, such as Distributed Primary Key. These functions load SPI implementation by configuring the type,so the type must be specified in the configuration file.

16. Why does my custom distributed primary key do not work after implementing ShardingKeyGenerator interface and configuring type property?

Answer:

Service Provider Interface (SPI) is a kind of API for the third party to implement or expand. Except implementing interface, you also need to create a corresponding file in META-INF/services to make the JVM load these SPI implementations.

More detail for SPI usage, please search by yourself.

Other ShardingSphere functionality implementation will take effect in the same way.

17. How to solve that data encryption can’t work with JPA?

Answer:

Because DDL for data encryption has not yet finished, JPA Entity cannot meet the DDL and DML at the same time, when JPA that automatically generates DDL is used with data encryption.

The solutions are as follows:

- Create JPA Entity with logicColumn which needs to encrypt.

- Disable JPA auto-ddl, For example setting auto-ddl=none.

- Create table manually. Table structure should use

cipherColumnandassistedQueryColumnto replace the logicColumn.

18. How to speed up the metadata loading when service starts up?

Answer:

- Update to 4.0.1 above, which helps speed up the process of loading table metadata from

the default dataSource. - Configure:

max.connections.size.per.query(Default value is 1) higher referring to connection pool you adopt(Version >= 3.0.0.M3 & Version < 5.0.0).max-connections-size-per-query(Default value is 1) higher referring to connection pool you adopt(Version >= 5.0.0).

19. How to allow range query with using inline sharding strategy(BETWEEN AND, >, <, >=, <=)?

Answer:

- Update to 4.1.0 above.

- Configure(A tip here: then each range query will be broadcast to every sharding table):

- Version 4.x:

allow.range.query.with.inline.shardingtotrue(Default value isfalse). - Version 5.x:

allow-range-query-with-inline-shardingtotruein InlineShardingStrategy (Default value isfalse).

20. Why there may be an error when configure both shardingsphere-jdbc-spring-boot-starter and a spring-boot-starter of certain datasource pool(such as druid)?

Answer:

- Because the spring-boot-starter of certain datasource pool (such as druid) will be configured before shardingsphere-jdbc-spring-boot-starter and create a default datasource, then conflict occur when ShardingSphere-JDBC create datasources.

- A simple way to solve this issue is removing the spring-boot-starter of certain datasource pool, shardingsphere-jdbc create datasources with suitable pools.

21. How to add a new logic schema dynamically when use ShardingSphere-Proxy?

Answer:

- Before version 4.1.0, sharing-proxy can’t support adding a new logic schema dynamically, for example, when a proxy starting with two logic schemas, it always hold the two schemas and will be notified about the table/rule changed events in the two schemas.

- Since version 4.1.0, sharing-proxy support adding a new logic schema dynamically via ShardingSphere-UI or zookeeper, and it’s a plan to support removing a exist logic schema dynamically in runtime.

22. How to use a suitable database tools connecting ShardingSphere-Proxy?

Answer:

- ShardingSphere-Proxy could be considered as a mysql sever, so we recommend using mysql command line tool to connect to and operate it.

- If users would like use a third-party database tool, there may be some errors cause of the certain implementation/options. For example, we recommend Navicat with version 11.1.13(not 12.x), and turn on “introspect using jdbc metadata”(or it will get all real tables info from informations_schema) in idea or datagrip.

23. Found a JtaTransactionManager in spring boot project when integrating with ShardingTransaction of XA

Answer:

shardingsphere-transaction-xa-coreinclude atomikos, it will trigger auto-configuration mechanism in spring-boot, add@SpringBootApplication(exclude = JtaAutoConfiguration.class)will solve it.

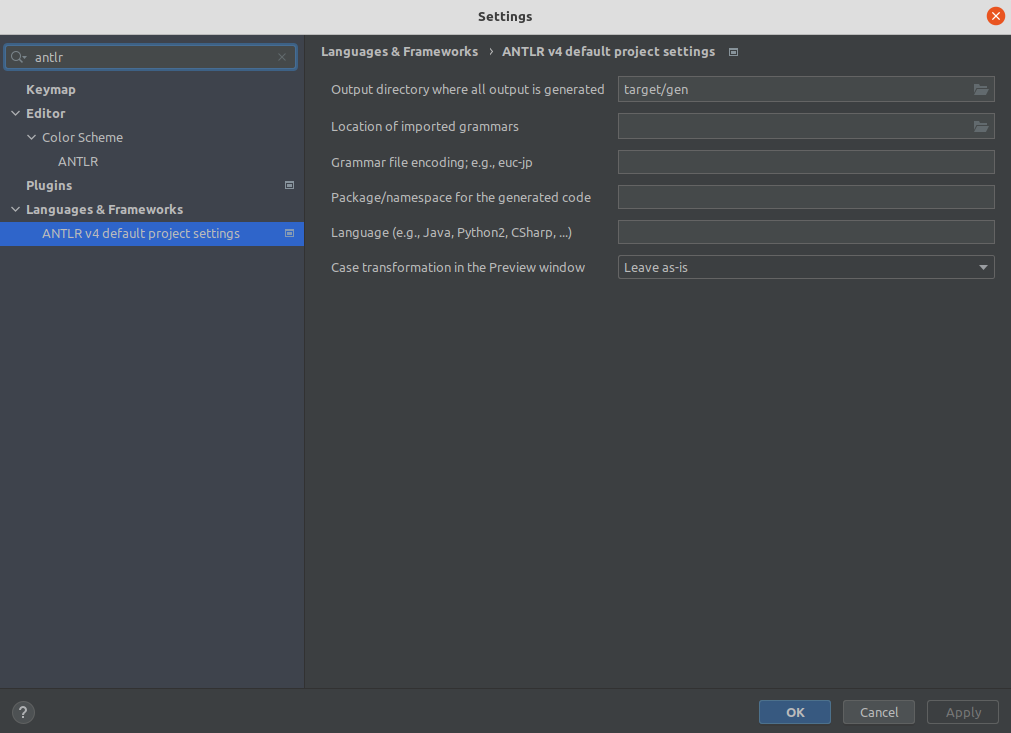

24. The ANTLR plugin generates codes in the same level directory as src, which is easy to commit by mistake. How to avoid it?

Answer:

Goto Settings -> Languages & Frameworks -> ANTLR v4 default project settings and configure the output directory of the generated code as target/gen as shown: