For an introduction to Terraform, please refer to this link.

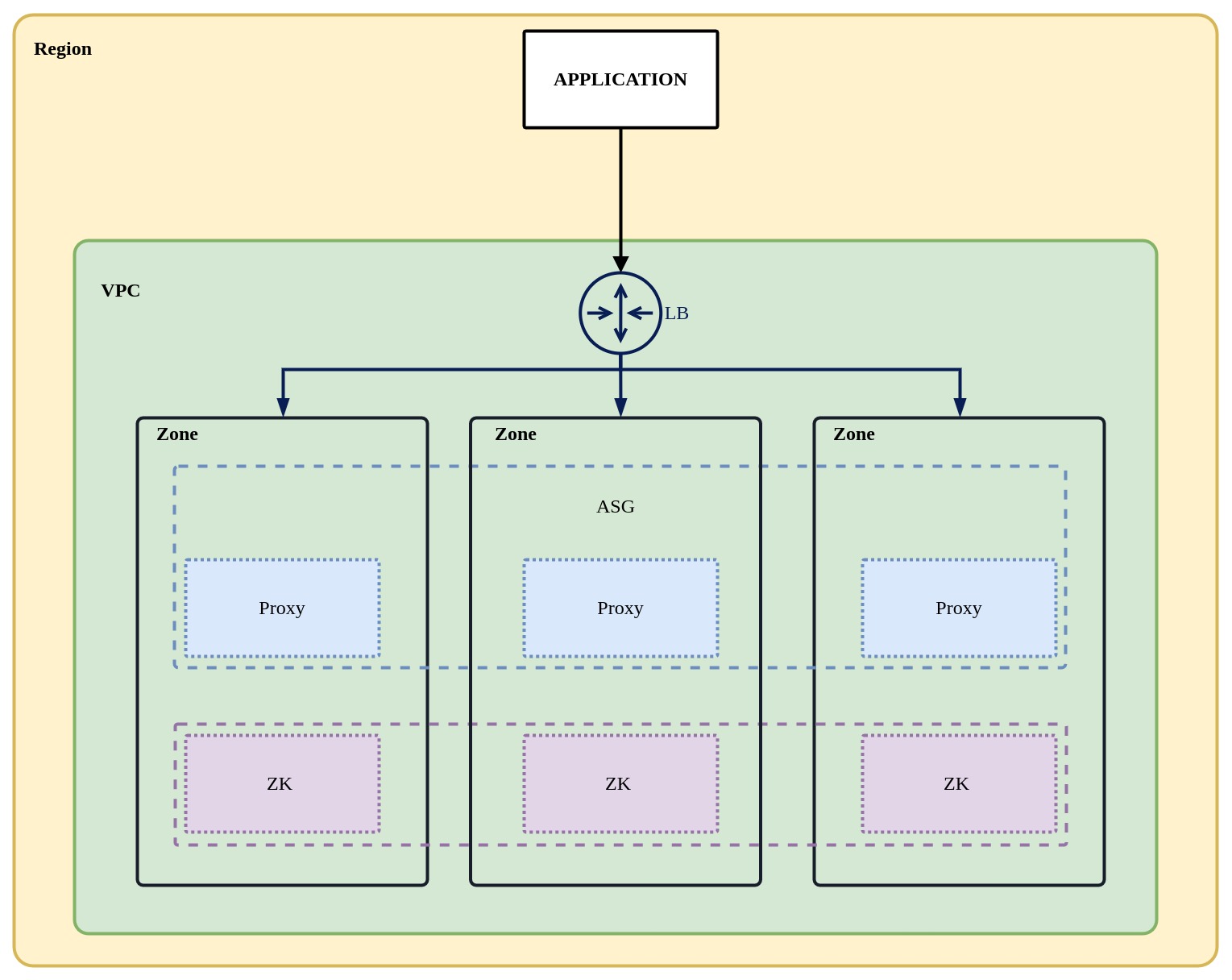

You can use Terraform to create a ShardingSphere high availability cluster on Huawei Cloud. The cluster architecture is shown below. More cloud providers will be supported in the near future.

The following Huawei Cloud resources are created:

To create a ShardingSphere Proxy highly available cluster, you need to prepare the following resources in advance:

Clone the repository and create the terraform.tfvars file according to the resources prepared above:

    git clone --depth=1 https://github.com/apache/shardingsphere-on-cloud.git
    cd shardingsphere-on-cloud/terraform/huawei

The terraform.tfvars sample content is as follows:

    shardingsphere_proxy_version = "5.3.1"
    image_id = ""
    key_name = "test-tf"
    flavor_id = "c7.large.2"
    vpc_id = "4b9db05b-4d57-464d-a9fe-83da3de0a74c"
    vip_subnet_id = ""
    subnet_ids = ["6d6c57ed-5284-4a7b-b0e3-0b24aa6c9552"]
    security_groups = ["f5ad3525-dc9e-482e-afde-868ee330e7a5"]
    lb_listener_port = 3307
    zk_flavor_id = "s6.medium.2"

Set the Huawei Cloud access key, secret key, and region as environment variables:

    export HW_ACCESS_KEY="AK"
    export HW_SECRET_KEY="SK"
    export HW_REGION_NAME="REGION"
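Terraform fails late and with a less obvious message if one of these variables is missing, so it can help to check them first. The following is a minimal sketch in POSIX shell; the function name `check_hw_env` is hypothetical and not part of the repository.

```shell
# Hypothetical helper: report any of the three Huawei Cloud variables
# that are unset or empty, and return non-zero if at least one is missing.
check_hw_env() {
  missing=""
  for name in HW_ACCESS_KEY HW_SECRET_KEY HW_REGION_NAME; do
    # Indirect lookup of the variable whose name is stored in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      missing="$missing $name"
    fi
  done
  if [ -n "$missing" ]; then
    echo "missing environment variables:$missing" >&2
    return 1
  fi
  return 0
}
```

One possible use is `check_hw_env && terraform plan -var-file=terraform.tfvars`, which skips the plan when a variable is missing.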

In the huawei directory, run the following commands to deploy the ShardingSphere Proxy cluster:

    terraform init
    terraform plan -var-file=terraform.tfvars
    terraform apply -var-file=terraform.tfvars
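The three commands can also be chained so that a failure in one step stops the rest. The sketch below is a variation rather than the documented procedure: it saves the plan with `-out` and applies that saved plan, so what is applied matches what was reviewed. The function name `deploy_cluster` and the plan file name `tfplan` are assumptions.

```shell
# Hypothetical wrapper: run init, plan, and apply in order,
# stopping at the first command that fails.
deploy_cluster() {
  var_file="${1:-terraform.tfvars}"   # variables file, as in the commands above
  plan_file="${2:-tfplan}"            # assumed name for the saved plan
  terraform init &&
    terraform plan -var-file="$var_file" -out="$plan_file" &&
    terraform apply "$plan_file"
}
```

Calling `deploy_cluster` with no arguments uses `terraform.tfvars` in the current directory.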

| Name | Version |
|---|---|
| huaweicloud | 1.43.0 |

| Name | Source | Version |
|---|---|---|
| zk | ./modules/zk | n/a |

| Name | Type |
|---|---|
| huaweicloud_as_configuration.ss | resource |
| huaweicloud_as_group.ss | resource |
| huaweicloud_dns_recordset.ss | resource |
| huaweicloud_dns_zone.private_zone | resource |
| huaweicloud_elb_listener.ss | resource |
| huaweicloud_elb_loadbalancer.ss | resource |
| huaweicloud_elb_monitor.ss | resource |
| huaweicloud_elb_pool.ss | resource |
| huaweicloud_availability_zones.zones | data source |
| huaweicloud_images_image.myimage | data source |
| huaweicloud_vpc_subnet.vipnet | data source |

| Name | Description | Type | Default | Required |
|---|---|---|---|---|
| flavor_id | The flavor ID of the ECS | string | n/a | yes |
| image_id | The image ID | string | "" | no |
| key_name | The SSH key pair for remote connection | string | n/a | yes |
| lb_listener_port | The load balancer listener port | string | n/a | yes |
| security_groups | List of security group IDs | list(string) | [] | no |
| shardingsphere_proxy_as_desired_number | The initial expected number of ShardingSphere Proxy instances in the Auto Scaling group | number | 3 | no |
| shardingsphere_proxy_as_healthcheck_grace_period | The health check grace period for instances, in seconds | number | 120 | no |
| shardingsphere_proxy_as_max_number | The maximum number of ShardingSphere Proxy instances in the Auto Scaling group | number | 6 | no |
| shardingsphere_proxy_doamin_prefix_name | The prefix of the ShardingSphere domain name. The final generated name is [prefix_name].[zone_name] | string | "proxy" | no |
| shardingsphere_proxy_version | The ShardingSphere Proxy version | string | n/a | yes |
| subnet_ids | List of subnets in your VPC, sorted by availability zone | list(string) | n/a | yes |
| vip_subnet_id | The IPv4 subnet ID of the subnet where the load balancer works | string | "" | no |
| vpc_id | The ID of your VPC | string | n/a | yes |
| zk_cluster_size | The ZooKeeper cluster size | number | 3 | no |
| zk_flavor_id | The ECS instance type | string | n/a | yes |
| zk_servers | The ZooKeeper servers | list(string) | [] | no |
| zone_id | The ID of the private zone | string | "" | no |
| zone_name | The name of the private zone | string | "shardingsphere.org" | no |
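Inputs marked as required above have no default, so each one needs an assignment in terraform.tfvars. As a rough sketch, the following POSIX shell function lists required inputs that have no `name = ...` line in a given file. The function name `missing_required_inputs` is hypothetical, and the check is a plain text match, not a real HCL parse.

```shell
# Hypothetical helper: print each required input that has no
# "name = ..." assignment at the start of a line in the given tfvars file.
missing_required_inputs() {
  tfvars_file="$1"
  for name in flavor_id key_name lb_listener_port \
      shardingsphere_proxy_version subnet_ids vpc_id zk_flavor_id; do
    # Anchoring at line start keeps flavor_id from matching zk_flavor_id.
    if ! grep -Eq "^[[:space:]]*${name}[[:space:]]*=" "$tfvars_file"; then
      echo "$name"
    fi
  done
}
```

Running `missing_required_inputs terraform.tfvars` prints nothing when every required input is assigned.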

| Name | Description |
|---|---|
| shardingsphere_domain | The domain name of the ShardingSphere Proxy Cluster for use by other services |
| zk_node_domain | The domain of zookeeper instances |
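After `terraform apply` completes, these values can be read back with `terraform output`. The sketch below builds a `host:port` string for clients from the `shardingsphere_domain` output. It assumes that output is a plain string (which `-raw` requires) and that the port matches the `lb_listener_port` you configured; the function name `proxy_endpoint` and the default of 3307, taken from the sample terraform.tfvars, are illustrative.

```shell
# Hypothetical helper: print "<domain>:<port>" for the proxy cluster.
# The port defaults to 3307, the lb_listener_port from the sample tfvars.
proxy_endpoint() {
  port="${1:-3307}"
  # -raw prints the bare value without quotes; it works only for
  # string, number, and boolean outputs.
  domain="$(terraform output -raw shardingsphere_domain)" || return 1
  echo "${domain}:${port}"
}
```

With the default `zone_name` and domain prefix, the domain part would follow the `[prefix_name].[zone_name]` pattern described in the inputs.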

By default, ZooKeeper and ShardingSphere Proxy services created using our Terraform configuration can be managed using systemd.

    systemctl start zookeeper
    systemctl stop zookeeper
    systemctl restart zookeeper

    systemctl start shardingsphere-proxy
    systemctl stop shardingsphere-proxy
    systemctl restart shardingsphere-proxy
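To see whether a service is running before or after one of these commands, `systemctl is-active` can be used. The following is a small sketch; the function name `report_services` is hypothetical, and it assumes you pass only the unit names that exist on the node you are logged in to.

```shell
# Hypothetical helper: print "<unit>: active" or "<unit>: inactive"
# for each systemd unit name given as an argument.
report_services() {
  for svc in "$@"; do
    # --quiet suppresses output; the exit status alone reports the state.
    if systemctl is-active --quiet "$svc"; then
      echo "$svc: active"
    else
      echo "$svc: inactive"
    fi
  done
}
```

For example, `report_services zookeeper` on a ZooKeeper node or `report_services shardingsphere-proxy` on a proxy node.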